A multimodal AI agent for parents and teachers.

An AI system that turns a single educational moment into two outputs: instant retrieval-backed guidance for the parent, and a personalized narrated story for the child. Currently deployed at Apple Montessori in New Jersey.

Fast guidance first. Full lesson second.

Two user-speed lanes instead of one long blocking workflow.

Retrieval-backed parent guidance returned while the full lesson is still generating.

Narrated, illustrated story generation with durable state, moderation gates, and retry recovery.

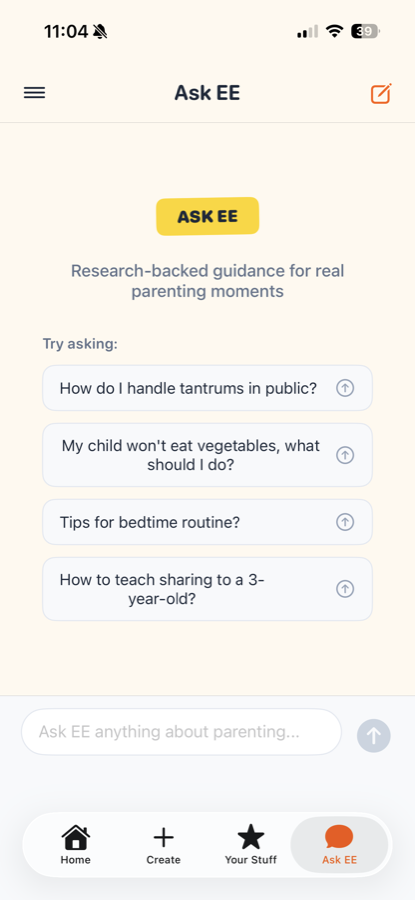

Generation is too slow for the "my kid is melting down right now" use case. Retrieval over a curated corpus returns grounded help in seconds, not minutes.

Text-to-speech (TTS) or image generation can fail mid-pipeline. Jobs persist state at each stage so the worker can resume from the last checkpoint instead of restarting.
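A minimal sketch of that checkpoint-and-resume idea, not the production worker. The stage names, the `Job` shape, and the `save` callback are all assumptions made for illustration; in a real deployment `save` would be a durable write such as a Postgres row update.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical stage names; the source does not list the real pipeline stages.
STAGES = ["story", "moderation", "tts", "images"]


@dataclass
class Job:
    job_id: str
    completed: list[str] = field(default_factory=list)       # stages already checkpointed
    artifacts: dict[str, str] = field(default_factory=dict)  # output of each finished stage


def run_job(
    job: Job,
    handlers: dict[str, Callable[[Job], str]],
    save: Callable[[Job], None],
) -> Job:
    """Run the remaining stages in order, persisting state after each one."""
    for stage in STAGES:
        if stage in job.completed:
            continue  # finished on an earlier attempt, so skip it
        # A handler may raise; checkpoints written before this point survive.
        job.artifacts[stage] = handlers[stage](job)
        job.completed.append(stage)
        save(job)  # assumed durable write of the updated job state
    return job
```

Calling `run_job` again with the same `Job` after a failure re-enters at the first stage that is not in `completed`, so an outage in narration does not force the story text to be regenerated.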

Content safety runs as a gate between generation and delivery, not as a post-hoc filter. Stories that fail safety checks never reach TTS.
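One way to express a gate rather than a filter is to make the narration step accept only an approved type. The sketch below assumes that approach; `ApprovedStory`, `moderate`, `synthesize_narration`, and the tiny blocklist are hypothetical stand-ins for whatever moderation model and TTS service the system actually uses.

```python
from dataclasses import dataclass


class ModerationRejected(Exception):
    """Raised when a draft fails the safety check and must not proceed."""


@dataclass(frozen=True)
class ApprovedStory:
    # By convention, only moderate() constructs this type.
    text: str


# Hypothetical blocklist standing in for a real moderation model or API.
BLOCKED_TERMS = {"violence", "weapon"}


def moderate(draft: str) -> ApprovedStory:
    """Return an ApprovedStory, or raise if the draft contains blocked terms."""
    lowered = draft.lower()
    hits = sorted(term for term in BLOCKED_TERMS if term in lowered)
    if hits:
        raise ModerationRejected(f"blocked terms: {hits}")
    return ApprovedStory(draft)


def synthesize_narration(story: ApprovedStory) -> str:
    """Placeholder for a TTS call; it takes the approved type, not a raw string."""
    return f"audio:{len(story.text)}-chars"


def deliver(draft: str) -> str:
    # The gate sits between generation and narration: a rejection raises here,
    # so synthesize_narration is never called for an unsafe draft.
    return synthesize_narration(moderate(draft))
```

Because a rejection raises before narration starts, no audio or imagery is produced for a story that would later have to be withdrawn.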

End-to-end production stack.

Six layers from client to infrastructure, each handling a specific concern.

SwiftUI with auth gating and async job UX

Handles session readiness, request shaping, polling, and smoothed progress so the experience feels responsive while generation runs off-request.

Supabase auth, edge ingress, and storage

Edge Functions own low-latency ingress, user validation, and secure handoff into the async runtime.

Postgres + pgvector for grounded guidance

Generates embeddings, runs RPC similarity search over a curated parenting corpus, and returns structured, retrieval-backed help.
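The ranking step can be illustrated in memory. This is a sketch of cosine-similarity top-k retrieval, not the deployed query: in Postgres the same ordering would typically come from a SQL function using pgvector's `<=>` cosine-distance operator. The corpus entries, the three-dimensional vectors, and the function names are invented for illustration.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec: list[float], corpus: list[dict], k: int = 3,
          min_score: float = 0.0) -> list[dict]:
    """Return up to k corpus entries ranked by similarity to the query."""
    scored = [(cosine_similarity(query_vec, doc["embedding"]), doc) for doc in corpus]
    scored = [pair for pair in scored if pair[0] >= min_score]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [{"title": doc["title"], "score": round(score, 3)} for score, doc in scored[:k]]


# Toy corpus with made-up 3-dimensional embeddings (real embeddings are far larger).
CORPUS = [
    {"title": "Naming big feelings", "embedding": [0.9, 0.1, 0.0]},
    {"title": "Sharing toys", "embedding": [0.1, 0.9, 0.0]},
    {"title": "Bedtime routines", "embedding": [0.0, 0.2, 0.9]},
]

results = top_k([1.0, 0.2, 0.0], CORPUS, k=2)
```

Returning titles with scores rather than raw text keeps the response structured, so the client can render guidance cards without parsing free-form model output.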

FastAPI worker with durable job state

Queued jobs with progress updates, moderation gates, graceful degradation, and retry handling across generation stages.
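Retry handling with graceful degradation might look roughly like the helper below. It is a sketch under assumptions: the attempt count, the exponential backoff schedule, and the idea of a `fallback` (for example, a placeholder illustration) are illustrative choices, not details confirmed by the source.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def run_with_retry(
    stage: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 0.5,
    fallback: Optional[Callable[[], T]] = None,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call stage() up to `attempts` times with exponential backoff.

    If every attempt fails, return fallback() when one is provided
    (graceful degradation); otherwise re-raise the last error.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    last_error: Optional[Exception] = None
    for attempt in range(attempts):
        try:
            return stage()
        except Exception as exc:  # a real worker would catch narrower error types
            last_error = exc
            if attempt < attempts - 1:
                sleep(base_delay * 2 ** attempt)  # 0.5s, 1.0s, ... by default
    if fallback is not None:
        return fallback()
    assert last_error is not None
    raise last_error
```

Passing `sleep` as a parameter keeps the helper testable without real delays, and the optional `fallback` lets a non-critical stage degrade instead of failing the whole job.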

Multi-modal story and lesson generation

Model outputs become typed intermediate artifacts, then turn into narration and visuals while preserving the educational lesson and age fit.
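As an illustration of typed intermediate artifacts, the sketch below parses raw model output into frozen dataclasses before any narration or image work begins. Every field name here (`lesson`, `min_age`, `illustration_prompt`, and so on) is an assumption; the source does not describe the actual schema.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class StoryPage:
    text: str                 # narration text for this page
    illustration_prompt: str  # prompt handed to the image stage


@dataclass(frozen=True)
class StoryDraft:
    title: str
    lesson: str               # the educational point the story must preserve
    min_age: int
    max_age: int
    pages: tuple[StoryPage, ...]


def parse_story(raw: str) -> StoryDraft:
    """Validate raw model JSON and convert it into a typed StoryDraft.

    Raises KeyError for missing fields and ValueError for invalid values,
    so malformed output fails here rather than in a later stage.
    """
    data = json.loads(raw)
    pages = tuple(
        StoryPage(text=str(p["text"]), illustration_prompt=str(p["illustration_prompt"]))
        for p in data["pages"]
    )
    if not pages:
        raise ValueError("story must contain at least one page")
    min_age, max_age = int(data["min_age"]), int(data["max_age"])
    if min_age > max_age:
        raise ValueError("min_age must not exceed max_age")
    return StoryDraft(
        title=str(data["title"]),
        lesson=str(data["lesson"]),
        min_age=min_age,
        max_age=max_age,
        pages=pages,
    )
```

Carrying `lesson` and the age range on the artifact itself means downstream narration and illustration stages can check their output against them instead of re-deriving intent from free text.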

Cloud deploy, push notifications, asset CDN

Separates fast edge interactions from long-running compute. Finished assets are stored for delivery through the CDN, and a push notification tells the mobile client when a lesson is ready.

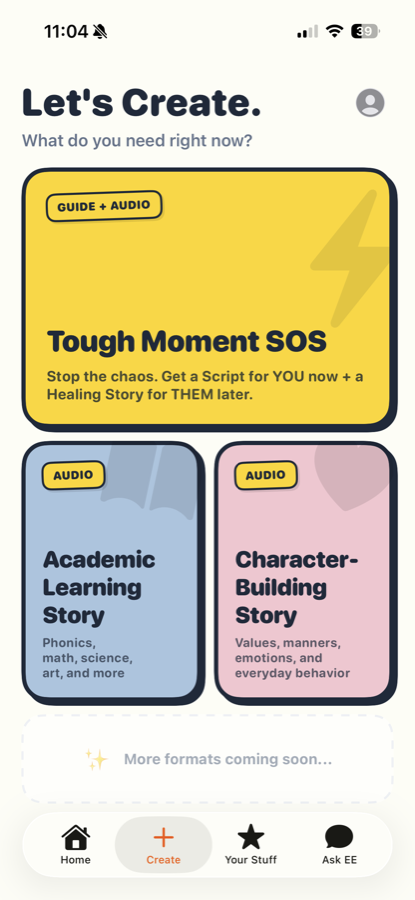

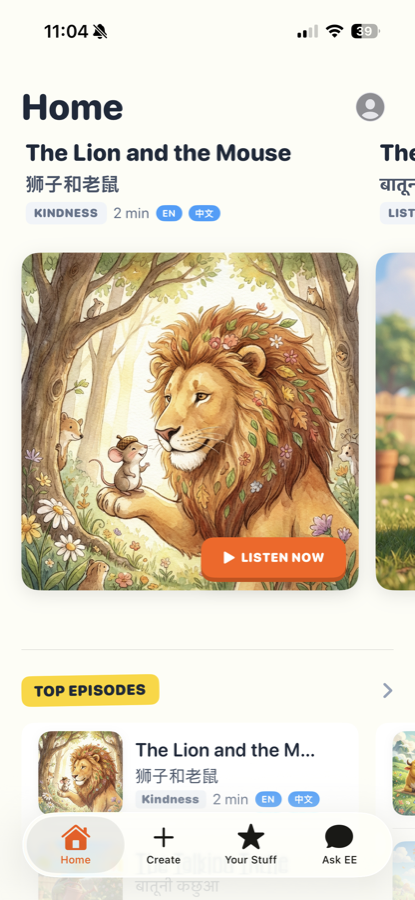

What the product looks like in use.

A fast guidance lane up front, then a replayable library of narrated lessons.